Reading the Notes Before You Panic

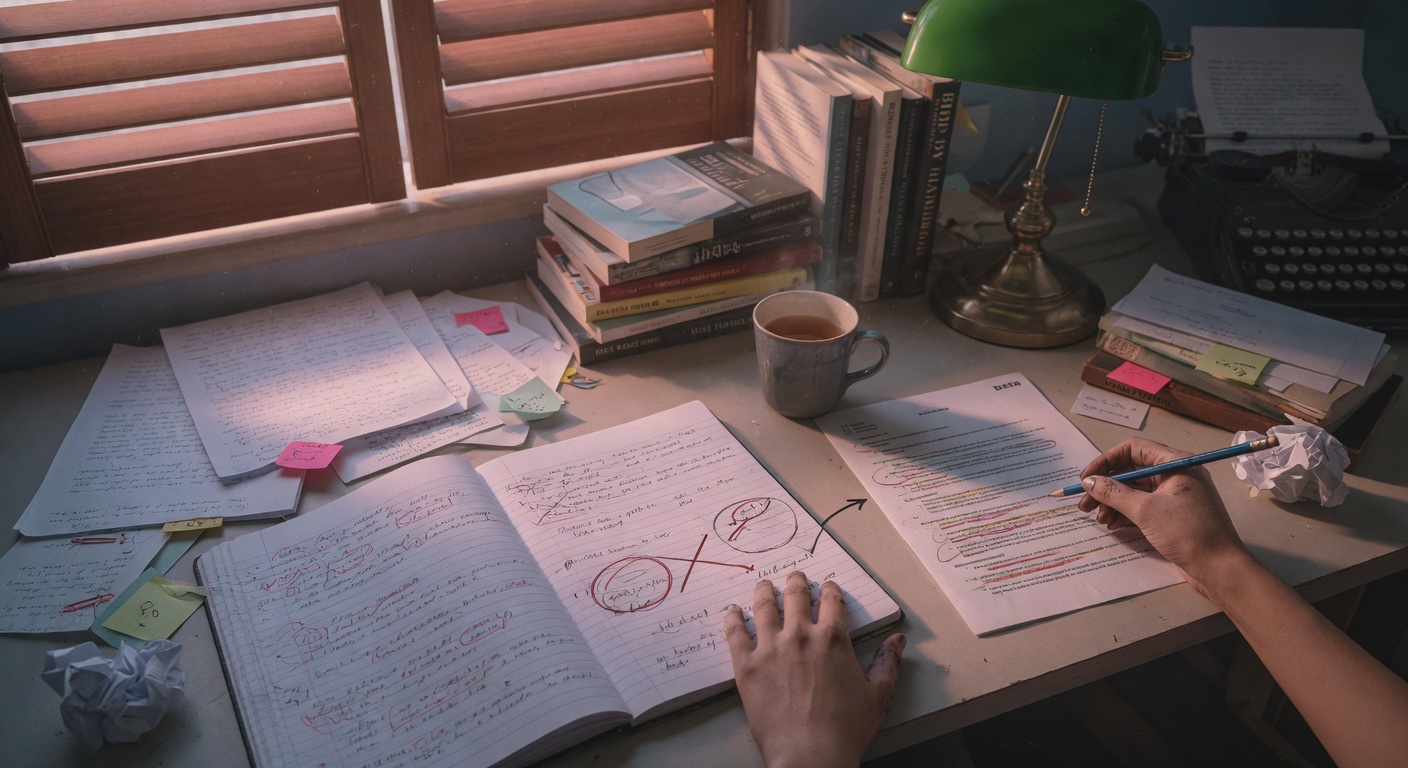

Beta reader feedback arrives in all shapes. Sometimes it's a two-page document with color-coded annotations and timestamps. Sometimes it's a voice memo, a handful of texts, or a handwritten note stuffed into the manuscript you lent someone three months ago. Whatever form it takes, the first thing most writers do is read it too fast, feel something in their chest tighten, and either dismiss every comment or accept all of it wholesale. Neither response serves the work.

The better move is to treat the notes as raw material, not a verdict. Beta readers are generous people who gave you their time and their honest reactions, but they are readers, not editors, not co-authors, and not mind readers. When someone writes "this character feels flat," they're reporting a reading experience, not diagnosing a craft failure. Your job is to translate that experience into a specific, actionable revision task. That translation is where the real work begins, and it's also where AI tools can be genuinely useful, if you use them with some intention.

Before you open a chat window, do one thing manually: read all the notes in a single sitting without touching your manuscript. Get the full picture. Then make a rough list of every comment and sort them into three buckets. The first bucket is structural, meaning anything about plot, pacing, chapter order, or the shape of the whole. The second bucket is local, meaning line-level concerns, dialogue that feels off, a scene that drags, a description that confuses. The third bucket is subjective, meaning the notes that reflect the reader's personal taste more than a craft problem. "I just didn't like the main character" and "this genre isn't really for me" both live in bucket three. You'll check in with those later, but they don't drive your revision plan.

Once you have that rough sorting done, look for patterns. If four readers flagged the same chapter as confusing, that's a signal worth trusting. If one reader wanted more romance and nobody else mentioned it, that's probably a taste issue. Patterns tell you where to spend your energy. Outliers tell you to pause and think before acting. This is the part AI can help you think through, because talking through a problem with a model sometimes clarifies what you actually believe about it.
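If your sorted notes already live in a spreadsheet, or you're comfortable with a little scripting, the pattern check can be sketched in a few lines of Python. This is only an illustration, not a required step: the sample notes, the `find_patterns` helper, and the two-reader threshold are all assumptions made up for the example, and a pencil tally on paper does the same job.

```python
from collections import defaultdict

# Hypothetical sample data: (reader, chapter, bucket, comment).
# Replace with your own sorted notes.
notes = [
    ("Reader A", 7, "structural", "Lost track of the timeline"),
    ("Reader B", 7, "structural", "Confusing jump between scenes"),
    ("Reader C", 7, "local", "Dialogue was hard to follow"),
    ("Reader D", 7, "structural", "Not sure who knows what here"),
    ("Reader B", 12, "subjective", "Wanted more romance"),
]

def find_patterns(notes, threshold=2):
    """Count distinct readers per chapter and split the chapters into
    patterns (at least `threshold` readers) and outliers (fewer)."""
    readers_by_chapter = defaultdict(set)
    for reader, chapter, _bucket, _comment in notes:
        # A set ensures a reader who left several notes counts once.
        readers_by_chapter[chapter].add(reader)

    patterns, outliers = {}, {}
    for chapter, readers in readers_by_chapter.items():
        target = patterns if len(readers) >= threshold else outliers
        target[chapter] = len(readers)
    return patterns, outliers

patterns, outliers = find_patterns(notes)
print("Patterns (chapter: readers):", patterns)
print("Outliers (chapter: readers):", outliers)
```

With the sample data, chapter 7 lands in the patterns and chapter 12 in the outliers. The threshold is a judgment call: with only three or four readers, two people agreeing may already be worth your attention.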

A quick note on limits before we go further: AI doesn't know your book. It hasn't read your manuscript, doesn't know your character's history, and can't feel the tonal thing you were going for in chapter nine. It's a thinking partner, not a developmental editor. Use it to generate options, pressure-test your ideas, and draft language you can reshape. Don't hand it your revision decisions and walk away. Your judgment stays in the room.

Prompts and Exercises for Diagnosing the Core Problems

Once you've sorted your notes and identified the patterns, the next step is diagnosis: figuring out the root cause of what readers noticed. A reader who says "the middle drags" might be responding to pacing, or to a subplot that doesn't earn its space, or to a protagonist who stops making active choices for forty pages. These are different problems with different solutions, and collapsing them into "fix the middle" will send you in circles.

This is exactly the kind of thinking a chat model can help with. You bring the reader observation, the model helps you generate hypotheses, and you evaluate them against your knowledge of your own manuscript. Think of it as talking through the problem with a well-read friend who has no ego invested in your book. For fiction writers, especially novelists, these diagnostic conversations can save hours of aimless rewriting.

Use this prompt when a reader flagged a character as unconvincing but didn't explain why. It works particularly well for literary fiction and character-driven commercial fiction. For memoir, swap "character" for the name of a real person you've rendered on the page, and specify that this is nonfiction so the model keeps its suggestions grounded in plausible human behavior rather than story archetype.

Use this next prompt when multiple readers said the pacing felt off in a specific section, but you can't tell whether the problem is scene length, lack of tension, or something structural. Poets working on a collection rather than a single poem can adapt this by swapping "section" for "sequence" and asking about momentum between pieces instead of narrative tension.

Use this third prompt when your notes contain contradictory feedback: one reader loved something another reader hated. This happens constantly with voice, with morally complex protagonists, and with endings. It's one of the genuinely hard parts of working with multiple beta readers, because both responses can be valid and still point in opposite directions. This prompt helps you think through whether you're facing a polarizing-but-intentional choice or a genuine craft problem that landed differently for different readers.

Building the Revision Plan: Workflow Prompts and Sequencing

Diagnosis is only half the work. The other half is sequencing: deciding what to fix first and in what order, so you're not revising the same pages four times because you worked from the bottom up. The classic mistake is starting with line edits when structural problems haven't been solved yet. You polish sentences that may not survive the next draft. That's demoralizing and inefficient, and it's the main reason revision feels endless.

A useful framework is to work from large to small. Structural issues first, scene-level issues second, paragraph and sentence work last. This sounds obvious but it's genuinely hard to practice when a clunky metaphor is sitting right in front of you and the structural problem is somewhere abstract in the middle of the book. Having a written revision plan, even a rough one, gives you something to return to when the urge to polish the opening paragraph strikes at two in the morning.

AI can help you build that plan, but only if you give it enough context to be useful. A vague prompt returns a vague plan. The more you tell the model about your manuscript's specific issues, the more useful its output will be. You're not outsourcing your thinking; you're using the model as a structured brainstorming surface. Then you edit the output down to the revision plan that actually fits your book and your working style.

For essayists and memoirists, the "structural" category looks different than it does for novelists. It might mean the order of sections, the balance between scene and reflection, or the coherence of your argument across pieces. The prompts below can be adapted by substituting essay-specific terms, and by asking the model to think about reader orientation and intellectual throughline rather than plot causality.

Use this prompt after your diagnostic work is done and you have a list of specific problems to address. It works across genres: just swap "novel" for "poetry collection," "essay collection," or "memoir" and specify the relevant structural concerns.

Use this prompt when you're working on a specific scene that multiple readers found weak, and you want to explore revision options before committing to an approach. This is particularly useful for dialogue-heavy scenes in fiction and memoir. For poetry, adapt it by describing a specific poem or stanza and asking for three different structural approaches rather than three scene strategies.

There's a version of this work that can feel like procrastination, where you're generating revision plans instead of revising. The check is simple: is your plan producing actual changes to actual pages? If you've spent three sessions in a chat window without touching the manuscript, step back. The plan exists to serve the writing, not replace it.

One last thing worth saying plainly: beta reader notes are filtered through each reader's reading history, preferences, and the particular afternoon they spent with your pages. Some of what they say will be exactly right. Some of it will be a misread. Some of it will point to a real problem but suggest the wrong solution. Your voice, your intention, and your sense of what the book is trying to be are the things no model can hold. You bring those. The tools help you think. The book is still yours to make.
